《机器学习实战》第5章—预测病马案例(Jupyter版基于sklearn的Logistic回归)

作为一个机器学习未入门的小白,要先老老实实的做一个调包侠。

·

目录

作为一个机器学习未入门的小白,要先老老实实的做一个调包侠。

一、导入第三方库

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import train_test_split二、数据预处理

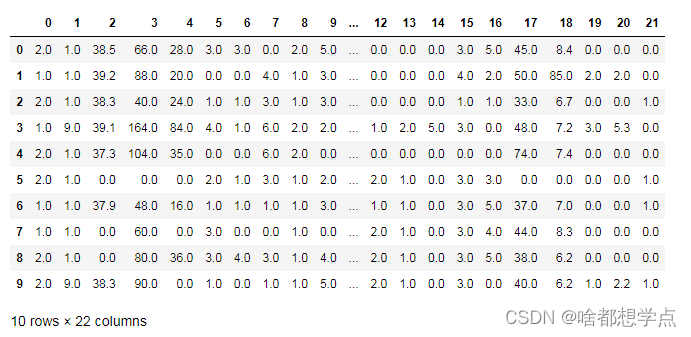

2.1 读取数据

txt数据是比较“纯净”

data_train = pd.read_csv("horseColicTraining.txt",header=None,sep='\s+')

data_test = pd.read_csv("horseColicTest.txt",header=None,sep='\s+')

data_train[:10]

2.2 划分特征集合标签集

# 对 horseColicTraining 中的数据划分

data_train_feature = np.array(data_train)[:,0:21]

data_train_labels = np.array(data_train)[:,21]

# 对 horseColicTest 中的数据划分

data_test_feature = np.array(data_test)[:,:21]

data_test_labels = np.array(data_test)[:,21]

三、建立模型

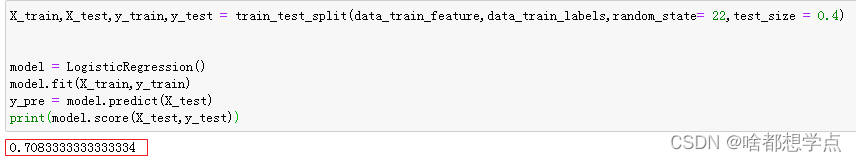

3.1 对训练集中的数据再次划分

X_train,X_test,y_train,y_test = train_test_split(data_train_feature,data_train_labels,random_state= 22,test_size = 0.4)

model = LogisticRegression()

model.fit(X_train,y_train)

y_pre = model.predict(X_test)

print(model.score(X_test,y_test))

3.2 用 test 数据进行验证

model.predict(data_test_feature)

print(model.score(data_test_feature,data_test_labels))

# 运行结果:0.746268656716418PS:

LogisticRegression()函数中,还有很多参数,但是作为一个小白,还没有完全弄明白。以后涉及到了在梳理吧。

LogisticRegression(

penalty='l2',

*,

dual=False,

tol=0.0001,

C=1.0,

fit_intercept=True,

intercept_scaling=1,

class_weight=None,

random_state=None,

solver='lbfgs',

max_iter=100,

multi_class='auto',

verbose=0,

warm_start=False,

n_jobs=None,

l1_ratio=None,

)更多推荐

已为社区贡献2条内容

已为社区贡献2条内容

所有评论(0)